Web crawling detection

Web crawling detection

Web crawlers or web-spiders are software which do web crawling from one page to another via the connecting hyperlink (links) on the pages. The most known and famous crawler is Google Bot. While Website crawlers are known since internet has been available, it was the search bots which got them attention.

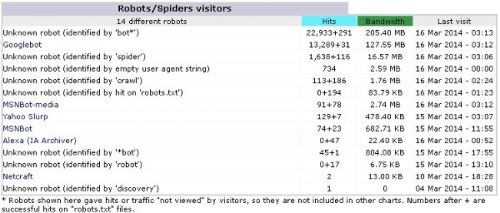

Search bots crawl pages and collects url and its page for showing them in their search results after indexing them. Soon there were spam bots which crawl internet to harvest emails for spamming them or post automated back-links in non secured forms. As social media became popular, website crawlers developed into getting profile data from websites. Today there are more web crawlers in internet than actual humans ! While search bot may be good in some sense, other crawlers just suck a website bandwidth. In this post, lets see how we can detect these web crawlers and prevent web crawlers from wasting your bandwidth limit.

Web crawler detection

While some website crawlers do have legitimate user-agent set, most don't and spoof it. Initially I used to monitor my server log files and used to block them individually, it became very cumbersome. Also some web crawlers which are not frequent visitors may not be identified at all !

To detect most web crawlers I did following

- Web crawler trap or bot trap

Most good crawlers will comply by robots.txt and nofollow link hence will not access pages they are not authorized. I created PHP Page which will log down the details of such crawler and linked it from every page with rel="nofollow". Since bad bot/ crawlers will still crawl it, I get their details now. PHP code below//Detect if web crawler is using proxy

function getUserIP()

{

$client = @$_SERVER['HTTP_CLIENT_IP'];

$forward = @$_SERVER['HTTP_X_FORWARDED_FOR'];

$remote = $_SERVER['REMOTE_ADDR'];

if(filter_var($client, FILTER_VALIDATE_IP)){$ip = $client;}

elseif(filter_var($forward, FILTER_VALIDATE_IP)){$ip = $forward;}

else{$ip = $remote;}

return $ip;

}

//Do not forget to sanitize as per your need

//Get the page url bot is on (I create hundreds of fake page

$url=$_SERVER['REQUEST_URI'];

//Get web crawlers IP

$IP=$_SERVER['REMOTE_ADDR'];

//Check real IP of crawler (if using proxy)

$realIP=getUserIP();

//Check user agent of bots - helps in finding search bots

$useragent=$_SERVER['HTTP_USER_AGENT'];

//Check from which page crawler is coming if set

$referrer=$_SERVER['HTTP_REFERER'];

//Get host name for real IP if from a server

$host = gethostbyaddr($realIP);

//Store Crawler details hence found in your DB / File

- Hidden links for web crawler

While bot trap will catch website crawlers who do not follow rel="nofollow" or robots.txt, some advanced web crawler may escape that. To catch every web crawler I create fake hyperlinks with style="display:none;". While such links are invisible to humans, web crawler do crawl such links identifying them using same PHP script.

- Particular/suspicious user agent details

- IP range from same crawlers

- Host data to identify the source

- Pages crawled to find bad web crawlers

- Number of pages crawled by particular IP (bandwidth sucked)

- Country of origin of crawlers

- IP range of good search bots

- IP Range of non-English search bots (Chinese Baidu etc.)

- IP Range to block now

PHP TUTORIAL SEO TUTORIAL

PHP TUTORIAL SEO TUTORIAL